Hello {{first_name|Motivated and Miffed Community}},

This week’s vibe: the AI race is quietly turning into an industrial project. The “winners” aren’t just shipping smarter models—they’re locking in budget, hardware, and workflow leverage that compounds.

✅ TL;DR

🏗️💸 AI capex is ballooning again—Big Tech is treating infrastructure like destiny, not a line item.

🧑‍💻⚙️ Coding models are getting agentic: less “autocomplete,” more “delegate a task and steer.”

🧠🔌 Nvidia’s numbers say the boom isn’t “overheated”… it’s still accelerating, even if investors flinch.

🎭🏦 Italy’s central bank is warning the public about AI deepfakes featuring its governor.

Today’s Sponsor

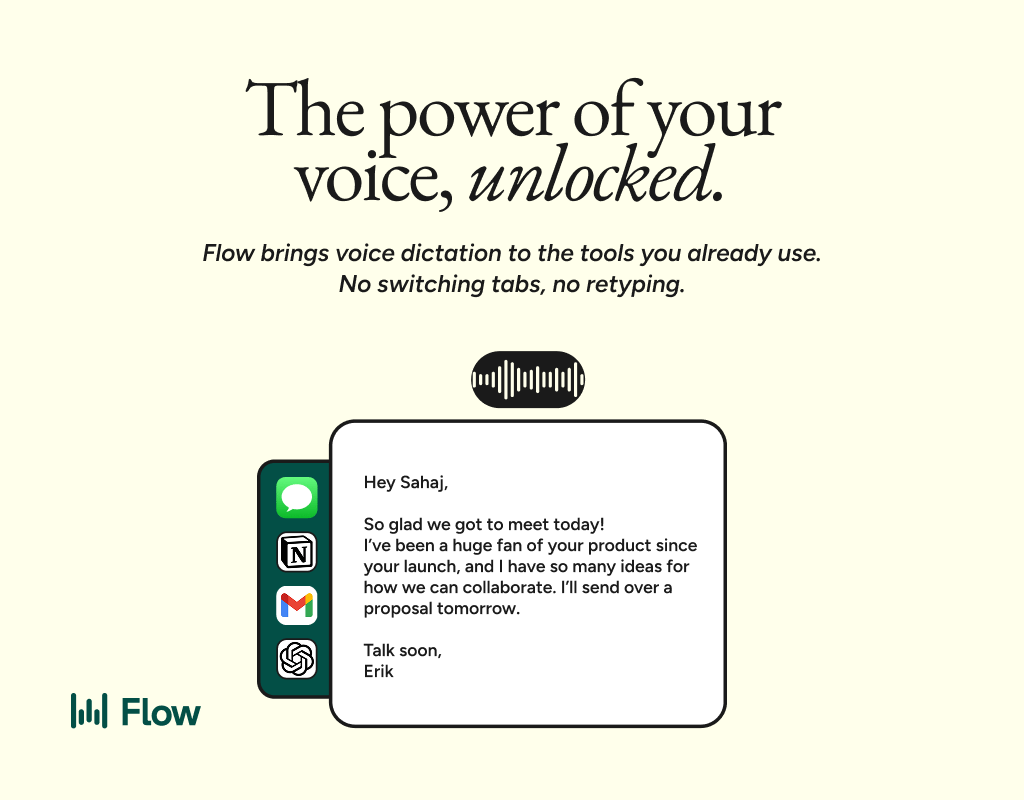

Speak naturally. Send without fixing.

Wispr Flow turns your voice into clean, professional text you can send the moment you stop talking. Not rough transcription you have to clean up. Actual polished text - ready for email, Slack, or any app.

Reid Hoffman sends 89% of his messages with zero edits using Flow. Millions of people worldwide have made it part of how they work, including teams at OpenAI, Vercel, and Clay.

Speak the way you think. Go on tangents. Change your mind mid-sentence. Flow strips the filler, fixes the grammar, and gives you text that reads like you spent five minutes writing it.

Works on Mac, Windows, iPhone, and now Android - free and unlimited on Android during launch.

🧠 AI News

1) Big Tech’s AI infrastructure bill: ~$650B in 2026

Bridgewater estimates Alphabet, Amazon, Meta, and Microsoft could collectively invest about $650B in 2026 to scale AI infrastructure, up from $410B in 2025 (an increase of roughly 59%).

The most important part isn’t the number—it’s what it signals: AI is being managed like a long-cycle build (power, chips, networking, data centers), not a short-cycle feature race. When budgets harden, roadmaps harden.

This also pressures everyone else downstream: if you’re a model company, you need guaranteed access to compute; if you’re an enterprise buyer, you’ll increasingly “buy the stack” (cloud + tooling + model + governance) because procurement hates uncertainty.

Why it matters: In the next phase, differentiation isn’t only model quality—it’s secured capacity and distribution. That’s how moats get poured.

2) OpenAI ships GPT-5.3-Codex: faster, more agentic coding

OpenAI introduced GPT-5.3-Codex, positioning it as their most capable agentic coding model to date—combining stronger coding performance with reasoning and “steerable” longer-running work.

This is another step in the shift from “write me a function” to “own this problem until it’s done.” The practical implication: teams that treat these tools like junior collaborators (clear specs, tests, staged rollouts, code review discipline) will compound velocity; teams that treat them like “magic autocomplete” will compound bugs.

Expect the next fight to move up a layer: not IDE features, but integration—repos, tickets, CI, security policy, and permissions—so the agent can safely act without becoming an internal incident generator.

Why it matters: The best coding model is increasingly the one that fits your workflow and your risk posture—not the one that demos best on day one.

3) Nvidia posts record results—Data Center is the whole story

Nvidia reported record quarterly revenue (~$68.1B) and record Data Center revenue (~$62.3B, roughly 91% of the total), underscoring how thoroughly AI infrastructure demand is driving the company’s growth.

The market’s mixed reaction is almost the point: even when fundamentals scream “demand,” investors still ask “how long can this last?” But the earnings narrative reads like a supply chain reality check: if compute is the bottleneck, the bottleneck owners print money.

Also worth tracking: the inference era keeps creeping in. Training is still massive, but the companies that win deployment at scale (latency, cost, reliability) will shape the next spend wave—sometimes in surprising places (networking, memory, specialized silicon, power management).

Why it matters: Even if AI hype cycles wobble, the infrastructure build-out looks increasingly like a multi-year replatforming of computing itself.

🤯 Crazy AI News

Italy’s central bank issues a deepfake warning (yes, really)

The Bank of Italy warned the public about fabricated articles, images, and videos featuring Governor Fabio Panetta, including content that appears to show him on TV or promoting investments, some of it created with AI deepfake techniques. The bank said it filed a complaint with judicial authorities.

This is the part of the AI era nobody “opted into”: institutional trust is becoming a security perimeter. The scam isn’t just “send money”—it’s “borrow credibility,” then scale it.

Why it matters: We’re moving from misinformation as a content problem to misinformation as an identity problem—and the defenses look less like fact-checking and more like authentication, provenance, and rapid-response comms.

👋 That’s All

This week rhymed: budget → capability → trust. The buildout keeps getting bigger, the tools keep getting more agentic, and the social layer keeps getting more fragile.

Stay MOTIVATED,

Gio